Most enterprise agent pipelines do not fail in production.

They fail before they ever get there.

The failure is quiet. It hides behind demos that work. Behind proof-of-concepts that impress the right people in the right rooms. Behind roadmaps that promise scale and budgets that get approved.

Then the real environment arrives — real data, real load, real edge cases, real users who do exactly what the system was not designed for — and the pipeline collapses under its own complexity.

This is the orchestration trap. And the numbers confirm it is not rare.

Gartner projects that more than 40% of agentic AI projects will be canceled by the end of 2027. Not because the models were bad. Because the systems could not be operationalized. MIT's research found that out of 1,837 enterprises surveyed, only 95 had AI agents running in actual production. McKinsey puts only 23% of enterprises in the "actually scaling" category. Another 39% are stuck in experimentation, running pilots that look good in Confluence and go nowhere in production.

The common thread across every failure pattern I have seen is not the model. It is the orchestration layer that was never designed to survive contact with the real world.

What Orchestration Actually Means — and Why Most Teams Get It Wrong

When engineering teams talk about agent orchestration, they mean the layer that routes tasks between agents, manages state, and coordinates outputs. Most treat it as infrastructure. As plumbing. The boring part that connects the interesting parts.

That framing is where the trap begins.

Orchestration in a multi-agent system is not plumbing. It is the decision layer. It determines which agent gets invoked, in what order, with what context, under what conditions, and — critically — what happens when something goes wrong. Get the orchestration layer wrong and nothing else in your system matters. Not your model selection. Not your prompt design. Not how clean your retrieval pipeline is.

The orchestration layer is where most of the real architectural decisions live. And most teams build it as an afterthought.

Carnegie Mellon's benchmarks make this concrete: even the best frontier models complete only 30 to 35% of multi-step tasks autonomously when left to their own orchestration logic. The bottleneck is not model intelligence. It is the coordination and recovery logic around those models. That gap — between what the model can do in isolation and what the system delivers in a real workflow — is almost entirely an orchestration problem.

The Demo-to-Production Gap Is an Orchestration Problem

In 2025, enterprises invested $37 billion in AI — triple the year before. The demos were impressive. The results were mixed. The delta lives in one place: the gap between how systems behave under controlled conditions and how they behave under production conditions.

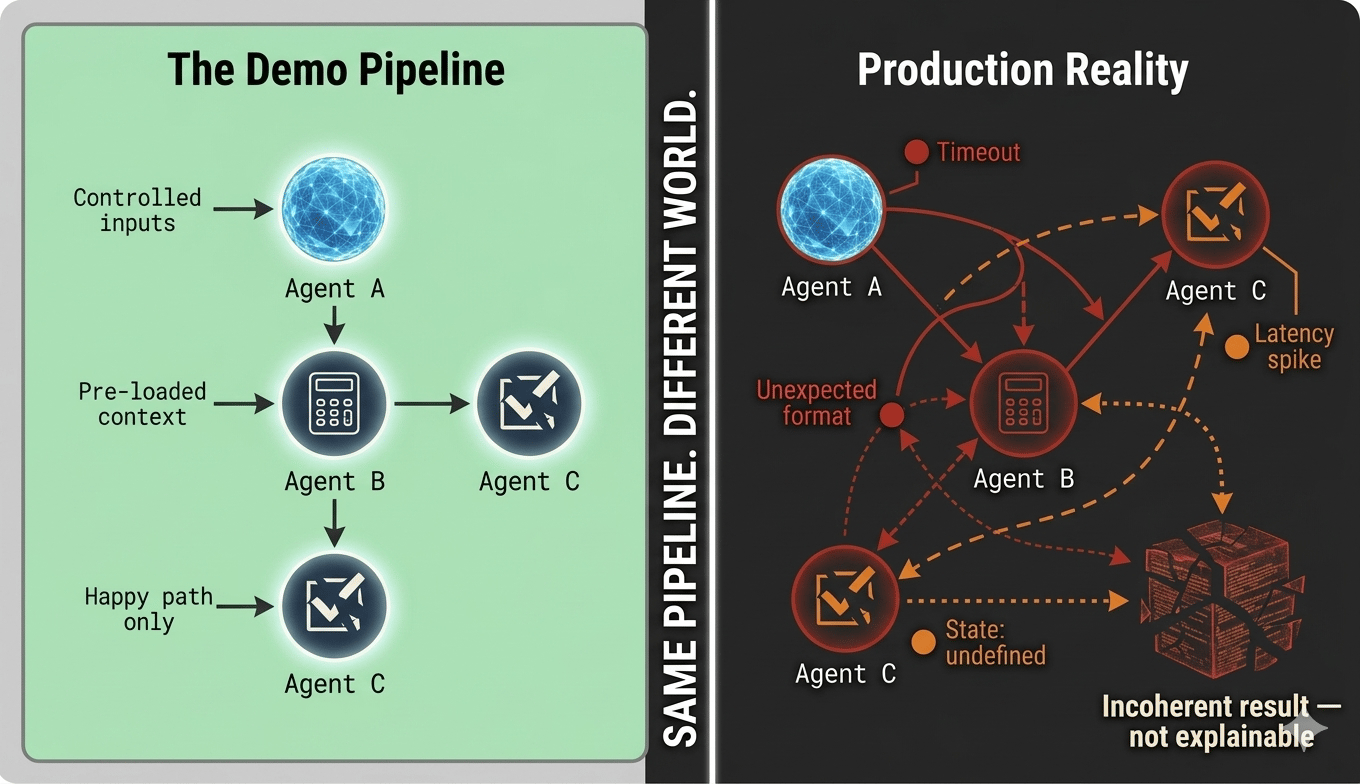

In a demo, you control the inputs. You have pre-loaded the right context. You have stress-tested the happy path until it runs clean. Every agent performs well because the conditions were engineered for performance.

In production, none of that holds. Users ask things the system was not designed for. External APIs return unexpected formats or time out at peak load. Upstream data schemas change without notice. An agent completing tasks in three seconds now takes thirty because a downstream dependency is slow. And the orchestration layer — built for the happy path — has no graceful path through any of it.

This is not a model problem. It is a design problem.

Specifically: it is a failure to design orchestration logic for degraded, unpredictable, adversarial conditions from the start. Every hour spent optimizing prompts before the orchestration layer is architected for production is an hour spent on the wrong problem.

Three Patterns That Reliably Create the Trap

The orchestration trap does not appear randomly. It follows consistent, predictable patterns. Here are the three I encounter most in enterprise environments — and why each one fails.

Pattern 1: The sequential chain that cannot recover.

This is the most common structure. Agent A passes output to Agent B, which passes to Agent C. It works until one agent in the chain fails, times out, or returns output outside expected bounds. Because the pipeline was designed for the happy path, there is no recovery logic, no fallback, no re-routing. The entire pipeline stalls.

In production environments, partial failures are not edge cases. They are the baseline operating condition. Building a pipeline that can only handle success is not building a pipeline. It is building a demo.
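What does "recovery logic" look like at the level of a single chain? Below is a minimal sketch, under stated assumptions. Each stage gets a bounded number of retries and an optional fallback agent. When every option fails, the chain raises an error that carries the full failure history instead of stalling. `Stage`, `run_stage`, `RECOVERABLE`, and the sample agents are hypothetical names. Treating `TimeoutError` and `ConnectionError` as the only recoverable failures is also an assumption you would tune per pipeline.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional

# Hypothetical agent interface: text in, text out.
Agent = Callable[[str], str]

# Assumption: these failures are transient and worth retrying; anything else propagates.
RECOVERABLE = (TimeoutError, ConnectionError)

class StageFailed(Exception):
    """Raised when a stage's primary agent and its fallback have both failed."""

@dataclass
class Stage:
    name: str
    primary: Agent
    fallback: Optional[Agent] = None
    max_attempts: int = 2

def run_stage(stage: Stage, payload: str) -> str:
    errors: List[str] = []
    for attempt in range(1, stage.max_attempts + 1):
        try:
            return stage.primary(payload)
        except RECOVERABLE as exc:
            errors.append(f"{stage.name} attempt {attempt}: {exc!r}")
    if stage.fallback is not None:
        try:
            return stage.fallback(payload)
        except RECOVERABLE as exc:
            errors.append(f"{stage.name} fallback: {exc!r}")
    raise StageFailed("; ".join(errors))

def run_chain(stages: List[Stage], payload: str) -> str:
    # Every stage either produces output (primary or fallback) or raises
    # StageFailed with its error history; nothing stalls silently.
    for stage in stages:
        payload = run_stage(stage, payload)
    return payload

def slow_summarizer(text: str) -> str:
    raise TimeoutError("upstream model slow")

chain = [
    Stage("extract", primary=str.strip),
    Stage("summarize", primary=slow_summarizer, fallback=lambda t: t[:10]),
    Stage("format", primary=str.upper),
]
print(run_chain(chain, "  contract text here  "))  # CONTRACT T
```

The fallback here is deliberately crude: it truncates instead of summarizing. The structural point is that a degraded answer, or an explicit error, replaces a silent stall.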

Pattern 2: The orchestrator that knows too much.

Some teams overcorrect by building a central orchestrator with detailed, hardcoded knowledge of every downstream agent — their capabilities, expected inputs, exact response formats, and failure modes. This looks like thorough engineering. It is actually a brittleness trap.

Any time an agent changes (its model is updated, its API shifts, its response format evolves), the orchestrator breaks because it has encoded assumptions about the agent's internals. The coupling is invisible in architecture diagrams and devastating in production. Microsoft's own transition from AutoGen to the unified Agent Framework was partly driven by this exact problem: tight coupling making the system unmaintainable at scale.

Pattern 3: The stateless pipeline in a stateful world.

Enterprise tasks are not atomic. A contract review agent does not read one document in isolation — it reads that document in the context of prior versions, regulatory precedents, counterparty history, and live negotiation state. A clinical documentation agent does not write one note — it operates within a patient's full longitudinal record.

A stateless pipeline treats every invocation as isolated. When orchestration does not manage state explicitly — tracking what was done, what was returned, what changed, and in what order — the system produces results that are locally correct and globally incoherent. No recovery from failure is possible. No audit trail exists. No human reviewer can reconstruct what happened.
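Making state explicit can be sketched in plain Python. In this sketch, each step reads the shared context, returns what it adds, and is marked complete only after it succeeds. A run that fails halfway can then resume without redoing finished work. Frameworks such as LangGraph provide persistent checkpointing for this. The sketch below does not use their API. `WorkflowState`, `run_workflow`, and the sample steps are hypothetical, and the state lives in memory rather than in durable storage.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple

# Hypothetical step signature: reads a copy of the shared context,
# returns the keys it wants merged back in.
Step = Callable[[dict], dict]

@dataclass
class WorkflowState:
    """Explicit state for one workflow run (in memory here; persisted in practice)."""
    context: Dict[str, object] = field(default_factory=dict)
    completed: List[str] = field(default_factory=list)             # finished steps, in order
    history: List[Tuple[str, dict]] = field(default_factory=list)  # (step, output) per step

def run_workflow(steps: List[Tuple[str, Step]], state: WorkflowState) -> WorkflowState:
    for name, step in steps:
        if name in state.completed:
            continue  # finished in an earlier run: resume past it instead of redoing work
        output = step(dict(state.context))  # shallow copy, so a step that raises leaves context as it was
        state.context.update(output)
        state.history.append((name, output))
        state.completed.append(name)
    return state

load_calls = {"n": 0}
def load(ctx: dict) -> dict:
    load_calls["n"] += 1
    return {"doc": "v2 of contract", "prior": "v1 of contract"}

compare_calls = {"n": 0}
def compare(ctx: dict) -> dict:
    compare_calls["n"] += 1
    if compare_calls["n"] == 1:
        raise TimeoutError("diff service slow")  # first attempt fails mid-run
    return {"changed": ctx["doc"] != ctx["prior"]}

steps = [("load", load), ("compare", compare)]
state = WorkflowState()
try:
    run_workflow(steps, state)
except TimeoutError:
    pass  # state still records that "load" finished
run_workflow(steps, state)
print(state.completed, load_calls["n"], state.context["changed"])  # ['load', 'compare'] 1 True
```

The second run picks up at `compare` with the earlier context intact. The `history` list gives a reviewer the order in which each piece of context arrived.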

LangGraph's rise as the dominant production orchestration framework owes much to the fact that it treats state management as a first-class architectural concern, not an add-on. The teams that understood this early are the ones in production today.

A pipeline that only handles success is not a pipeline. It is a demo with a roadmap attached.

What Production-Grade Orchestration Actually Requires

Building orchestration that survives contact with production is not complicated in concept. It is rigorous in execution. There are four non-negotiable requirements.

Explicit failure handling at every node — not generic error catching.

This means designing the failure paths before the success paths. What happens when Agent B times out? What happens when Agent C returns a confidence score below threshold? What happens when the external API returns a 429? Each of these scenarios needs a defined resolution path. Most teams write catch-all error handlers and call it done. That is not failure handling. That is logging a crash.
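One way to make "a defined resolution path" concrete is an explicit policy table. The table maps each failure mode to an action, and anything unlisted fails fast rather than being swallowed. The sketch below assumes a few things. `RateLimited` stands in for whatever your API client raises on a 429. `LowConfidence` stands in for a below-threshold confidence check. The backoff schedule and retry count are illustrative. All names are hypothetical.

```python
import time
from enum import Enum, auto
from typing import Callable, Optional

class Resolution(Enum):
    RETRY_WITH_BACKOFF = auto()
    FALLBACK_AGENT = auto()
    HUMAN_REVIEW = auto()
    FAIL_FAST = auto()

class RateLimited(Exception):
    """Hypothetical: raised by an API client wrapper on HTTP 429."""
    def __init__(self, retry_after: float = 1.0) -> None:
        super().__init__(f"rate limited, retry after {retry_after}s")
        self.retry_after = retry_after

class LowConfidence(Exception):
    """Hypothetical: raised when an agent's confidence score is below threshold."""

# Each failure mode maps to a defined resolution path; anything unlisted fails fast.
POLICY = {
    RateLimited: Resolution.RETRY_WITH_BACKOFF,
    TimeoutError: Resolution.FALLBACK_AGENT,
    LowConfidence: Resolution.HUMAN_REVIEW,
}

def resolve(exc: Exception) -> Resolution:
    for exc_type, resolution in POLICY.items():
        if isinstance(exc, exc_type):
            return resolution
    return Resolution.FAIL_FAST

def run_node(primary: Callable[[str], str],
             fallback: Optional[Callable[[str], str]],
             payload: str,
             max_retries: int = 3,
             sleep: Callable[[float], None] = time.sleep) -> dict:
    for attempt in range(max_retries + 1):
        try:
            return {"status": "ok", "output": primary(payload)}
        except Exception as exc:
            action = resolve(exc)
            if action is Resolution.RETRY_WITH_BACKOFF and attempt < max_retries:
                sleep(exc.retry_after * 2 ** attempt)  # exponential backoff
                continue
            if action is Resolution.FALLBACK_AGENT and fallback is not None:
                return {"status": "fallback", "output": fallback(payload)}
            if action is Resolution.HUMAN_REVIEW:
                return {"status": "needs_review", "output": None, "reason": str(exc)}
            raise  # FAIL_FAST, exhausted retries, or no fallback: surface the real error
    raise AssertionError("unreachable when max_retries >= 0")

def timeout_agent(q: str) -> str:
    raise TimeoutError("agent B timed out")

print(run_node(timeout_agent, lambda q: "cached answer", "q"))
# {'status': 'fallback', 'output': 'cached answer'}
```

The `except Exception` clause is not a catch-all in the sense criticized above. It classifies every error and re-raises anything the policy does not name.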

Loose coupling between the orchestrator and its agents.

The orchestrator should know what capability it needs, not which specific agent provides it or how that agent works internally. This separation is what allows individual agents to be updated, swapped, or retired without cascading failures upstream. The OpenAI Agents SDK's handoff pattern gets this right: agents delegate capability, not execution details. Teams that build to this principle make their systems maintainable. Teams that hardcode agent internals into their orchestrators build systems that work once and break forever.
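The decoupling principle can be illustrated with a capability registry. The orchestration function names only capabilities, and agents are registered or retired behind them. This is not the OpenAI Agents SDK's API. It is a plain-Python sketch of the same idea, and `CapabilityRegistry`, `review_contract`, and the sample agents are hypothetical.

```python
from typing import Callable, Dict, List

Handler = Callable[[str], str]  # hypothetical agent interface

class CapabilityRegistry:
    """The orchestrator asks for a capability by name; it never imports or inspects agents."""

    def __init__(self) -> None:
        self._providers: Dict[str, List[Handler]] = {}

    def register(self, capability: str, handler: Handler) -> None:
        self._providers.setdefault(capability, []).append(handler)

    def unregister(self, capability: str, handler: Handler) -> None:
        self._providers[capability].remove(handler)

    def invoke(self, capability: str, payload: str) -> str:
        providers = self._providers.get(capability)
        if not providers:
            raise LookupError(f"no agent provides {capability!r}")
        return providers[0](payload)  # simplest selection policy: first registered

def review_contract(registry: CapabilityRegistry, text: str) -> str:
    # Orchestration logic names capabilities only, never concrete agents.
    clauses = registry.invoke("extract_clauses", text)
    return registry.invoke("assess_risk", clauses)

def risk_v1(clauses: str) -> str:
    return f"risk-v1:{len(clauses)}"

def risk_v2(clauses: str) -> str:
    return f"risk-v2:{clauses.upper()}"

registry = CapabilityRegistry()
registry.register("extract_clauses", lambda t: t.split(";")[0])
registry.register("assess_risk", risk_v1)
print(review_contract(registry, "indemnity;termination"))  # risk-v1:9

# Retire v1 and register v2; review_contract is untouched.
registry.unregister("assess_risk", risk_v1)
registry.register("assess_risk", risk_v2)
print(review_contract(registry, "indemnity;termination"))  # risk-v2:INDEMNITY
```

A real registry would also describe each capability's input and output contract. That way, swapping an agent is validated against the contract rather than against the agent's internals.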

Explicit state management as an architectural requirement.

Every invocation should produce a traceable record: what inputs were used, what state existed at invocation time, what the agent returned, and when. This is not just engineering hygiene. In regulated industries, it is the foundation of auditability. You cannot govern a system whose state you cannot inspect. You cannot explain a decision you cannot reconstruct.
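The traceable record described above might look like the following minimal sketch. Each invocation stores its inputs, a fingerprint of the state it saw, its output, and a UTC timestamp, and a run can be replayed in order. `InvocationRecord`, `TraceLog`, and `digest` are hypothetical names. The in-memory list stands in for the durable, tamper-evident storage a regulated deployment would need.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Any, Dict, List

def digest(obj: Any) -> str:
    """SHA-256 of a JSON-serializable object; sort_keys makes it independent of key order."""
    return hashlib.sha256(json.dumps(obj, sort_keys=True).encode("utf-8")).hexdigest()

@dataclass(frozen=True)
class InvocationRecord:
    run_id: str
    step: int                # position of this invocation within the run
    agent: str
    inputs: Dict[str, Any]
    state_digest: str        # fingerprint of the state snapshot at invocation time
    output: Dict[str, Any]
    timestamp: str           # UTC, ISO 8601

class TraceLog:
    """Append-only and in memory here; a regulated deployment would persist it durably."""

    def __init__(self) -> None:
        self._records: List[InvocationRecord] = []

    def record(self, run_id: str, agent: str, inputs: dict, state: dict, output: dict) -> InvocationRecord:
        step = sum(1 for r in self._records if r.run_id == run_id)
        rec = InvocationRecord(
            run_id=run_id,
            step=step,
            agent=agent,
            inputs=dict(inputs),
            state_digest=digest(state),
            output=dict(output),
            timestamp=datetime.now(timezone.utc).isoformat(),
        )
        self._records.append(rec)
        return rec

    def replay(self, run_id: str) -> List[dict]:
        """Every invocation in a run, in order: what a reviewer needs to reconstruct it."""
        return [asdict(r) for r in self._records if r.run_id == run_id]

log = TraceLog()
state = {"customer": "c-17", "stage": "retrieval"}
log.record("run-1", "retriever", {"query": "credit history"}, state, {"docs": 3})
state["stage"] = "drafting"
log.record("run-1", "drafter", {"docs": 3}, state, {"decision": "refer"})
for row in log.replay("run-1"):
    print(row["step"], row["agent"], row["state_digest"][:8])
```

Hashing the state, rather than copying it into every record, keeps records small. It still lets an auditor verify that a stored snapshot is the one the agent actually saw.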

Load-aware routing for high-throughput environments.

In enterprise-scale deployments, static routing logic fails. The orchestration layer needs to make routing decisions based on real-time system conditions — available agent capacity, current latency, active SLA obligations — not just task type. A workflow that processes 50,000 tasks per day cannot be routed the same way as a demo processing 20.
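The following sketch shows load-aware routing under simple assumptions. The orchestrator can read each agent replica's in-flight count, concurrency limit, and recent p95 latency. It filters out replicas that are saturated or too slow for the task's SLA, then picks the least-utilized one. The metric names and the selection rule are hypothetical. Production routers usually add queueing and weighted or probabilistic selection.

```python
from dataclasses import dataclass
from typing import List, Optional

@dataclass
class AgentStatus:
    """Hypothetical live metrics an orchestrator might poll for each agent replica."""
    name: str
    capability: str
    in_flight: int          # requests currently being processed
    max_concurrency: int    # capacity limit for this replica
    p95_latency_ms: float   # recent observed tail latency

def route(capability: str, sla_ms: float, pool: List[AgentStatus]) -> Optional[AgentStatus]:
    candidates = [
        a for a in pool
        if a.capability == capability
        and a.in_flight < a.max_concurrency   # has spare capacity
        and a.p95_latency_ms <= sla_ms        # fast enough for this task's SLA
    ]
    if not candidates:
        return None  # caller should queue, shed load, or degrade rather than block
    # Prefer the least-utilized replica; break ties on latency.
    return min(candidates, key=lambda a: (a.in_flight / a.max_concurrency, a.p95_latency_ms))

pool = [
    AgentStatus("summarizer-a", "summarize", in_flight=10, max_concurrency=10, p95_latency_ms=800),   # saturated
    AgentStatus("summarizer-b", "summarize", in_flight=2, max_concurrency=10, p95_latency_ms=4500),   # too slow
    AgentStatus("summarizer-c", "summarize", in_flight=6, max_concurrency=10, p95_latency_ms=1200),
]
print(route("summarize", sla_ms=2000, pool=pool).name)  # summarizer-c
print(route("summarize", sla_ms=500, pool=pool))        # None
```

Routing on task type alone would have sent work to the saturated `summarizer-a`. Returning `None` forces the caller to choose an explicit degradation path.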

None of this is exotic. All of it is routinely deferred in the race from proof-of-concept to pilot. All of it becomes a crisis within six months of attempted production deployment.

The Governance Layer Nobody Accounts For

Here is the piece that enterprises in regulated industries almost always discover too late: orchestration is not just an engineering problem. It is a governance problem.

In FinTech, healthcare, and legal AI deployments, systems operate under regulatory obligations that require explainability, auditability, and control. When a multi-agent pipeline produces a credit decision, a clinical recommendation, or a contract clause, someone needs to explain exactly how that output was produced. Which agents were invoked. What context they operated on. What intermediate outputs influenced the final result.

Of regulated enterprises surveyed in a 2025 industry study, 42% plan to introduce approval and review controls for AI agents — compared to only 16% of unregulated enterprises. Governance is not a nice-to-have in high-risk environments. It is an architectural requirement. And an orchestration layer without comprehensive logging, state tracking, and explainable routing cannot satisfy it.
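An approval and review control can start as a gate in the orchestration layer. High-risk output kinds are held in a review queue until a named person approves or rejects them, and low-risk outputs pass through. The sketch below is hypothetical throughout. It assumes a fixed set of high-risk kinds, and `Decision` and `ApprovalGate` are illustrative names. Real policies would be configurable and tied to the trace log.

```python
from dataclasses import dataclass, field
from typing import List

# Hypothetical policy: which output kinds always require a human sign-off.
HIGH_RISK_KINDS = {"credit_decision", "clinical_recommendation", "contract_clause"}

@dataclass(eq=False)  # identity comparison: two identical-looking decisions stay distinct
class Decision:
    kind: str
    payload: dict
    status: str = "pending"
    reviewer: str = ""

@dataclass
class ApprovalGate:
    review_queue: List[Decision] = field(default_factory=list)

    def submit(self, decision: Decision) -> Decision:
        if decision.kind in HIGH_RISK_KINDS:
            self.review_queue.append(decision)  # held, not released downstream
        else:
            decision.status = "auto_approved"
        return decision

    def review(self, decision: Decision, reviewer: str, approved: bool) -> Decision:
        self.review_queue.remove(decision)  # ValueError if it was never queued
        decision.status = "approved" if approved else "rejected"
        decision.reviewer = reviewer  # who signed off becomes part of the record
        return decision

gate = ApprovalGate()
summary = gate.submit(Decision("meeting_summary", {"text": "weekly sync notes"}))
credit = gate.submit(Decision("credit_decision", {"applicant": "a-42", "limit": 5000}))
print(summary.status, credit.status, len(gate.review_queue))  # auto_approved pending 1
gate.review(credit, reviewer="analyst-7", approved=True)
print(credit.status, credit.reviewer, len(gate.review_queue))  # approved analyst-7 0
```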

The cost of retrofitting governance into an orchestration layer that was not designed for it is not just technical. It is architectural, organizational, and — in regulated industries — legal. The cost of building it in from the start is a fraction of that. Every production deployment I have seen that skipped this step eventually paid for it.

I covered the foundational thinking on context design that underlies effective agent architecture in "Context Is the New Code." The orchestration layer is where every gap in context management compounds fastest and most visibly.

What Changes in the Next 18 to 36 Months

The tooling landscape is consolidating fast. LangGraph has emerged as the production orchestration leader. Microsoft unified AutoGen and Semantic Kernel into the Agent Framework, eliminating a fragmented choice that was causing real architectural problems. MCP (Model Context Protocol) has been adopted by OpenAI, Google, and Microsoft as the interoperability standard for connecting agents to tools and data. As a result, agent-to-tool communication, and to a lesser extent agent-to-agent communication, is beginning to standardize in ways that reduce bespoke wiring.

This consolidation will help. But tooling does not solve architectural thinking.

The next wave of enterprise AI failure will not come from teams that chose the wrong model. It will come from teams that built orchestration at demo scale, scaled the user base, and could not understand why the system was collapsing. The pattern is already visible in the numbers. Gartner's 40% cancellation projection is not pessimism; it is a trend line drawn from current data.

Organizations that invest in orchestration architecture now — with failure handling, governance-grade state management, loose coupling, and load-aware routing as non-negotiable requirements — will be deploying reliably at scale when their competitors are still debugging pipelines that worked fine in the pilot.

The window to build this right is open. It will not stay open indefinitely.

The Bottom Line

Orchestration is not infrastructure. It is architecture.

The teams treating it as a detail to solve after the interesting AI work is done will find it becomes the only problem that matters — at the exact moment they can least afford to solve it.

Build the orchestration layer like the system depends on it.

Because it does.

Was this useful? Forward it to one person in your organization who is currently building an agent pipeline. It may save them six months.

New here? Every Tuesday, The Deployment Layer publishes one deep-dive on enterprise AI architecture, agent systems, and responsible AI governance. Subscribe free at thedeploymentlayer.com

Have a production failure story? I read every reply. Hit reply and tell me what your orchestration layer actually looked like when things went sideways.

Next Tuesday: The Evaluation Problem — Why You Cannot Trust Your AI System Until You Can Measure It. If this issue was about building right, next week is about knowing whether you did. Make sure you're subscribed → thedeploymentlayer.com

I am G, a senior AI leader focused on enterprise AI strategy, large language model architectures, and Responsible AI governance. I work with organizations to move beyond experimentation — operationalizing AI at scale while delivering measurable business impact through trusted, compliant, and well-governed systems.
